Previously, I calculated the range of overall mean scores of PGR-ranked institutions from 2006-2021. This gave readers a sense of which institutions’ scores changed the most over time. However, that analysis was limited in at least two key respects:

- First, the range is just one measure of the spread of a department’s scores, and it does a poorer job than standard deviation of capturing the fluctuation itself and of handling outliers.

- Second, the analysis relied on unweighted averages to calculate each institution’s overall mean score, but weighted averages are more appropriate because the number of evaluators varied across iterations of the PGR.

Correcting these issues was a bit more difficult than I expected, but it was well worth it. Most valuable was learning how to calculate weighted mean and weighted standard deviation in Python, which I posted about here. Once I had defined the functions required to calculate the values (weighted mean, weighted standard deviation, and weighted variance), I modified the Python script that had generated the summary statistics for the range analysis and produced a new table with the relevant values. I then ran some queries to get the data I wanted and wrote the results to a CSV file, from which I generated some basic visualizations in RStudio.
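As a rough illustration of the calculations described above, here is a minimal sketch of weighted mean, variance, and standard deviation in plain Python. It is not the script used for this analysis. The function names, the sample scores, and the evaluator counts are all made up for illustration, and the sketch assumes that each iteration’s mean score is weighted by its number of evaluators and that the variance takes the simple population form with no bias correction.

```python
import math


def weighted_mean(values, weights):
    """Average of values, where each value counts in proportion to its weight."""
    total_weight = sum(weights)
    return sum(v * w for v, w in zip(values, weights)) / total_weight


def weighted_variance(values, weights):
    """Population-style weighted variance (assumption: no bias correction)."""
    mean = weighted_mean(values, weights)
    total_weight = sum(weights)
    return sum(w * (v - mean) ** 2 for v, w in zip(values, weights)) / total_weight


def weighted_std(values, weights):
    """Weighted standard deviation: square root of the weighted variance."""
    return math.sqrt(weighted_variance(values, weights))


# Hypothetical mean scores for one institution across three PGR iterations,
# with a hypothetical number of evaluators for each iteration.
scores = [3.0, 3.2, 3.1]
evaluators = [100, 200, 300]

print(weighted_mean(scores, evaluators))
print(weighted_std(scores, evaluators))
```

With equal weights these functions reduce to the ordinary mean and population standard deviation, which is a handy sanity check.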

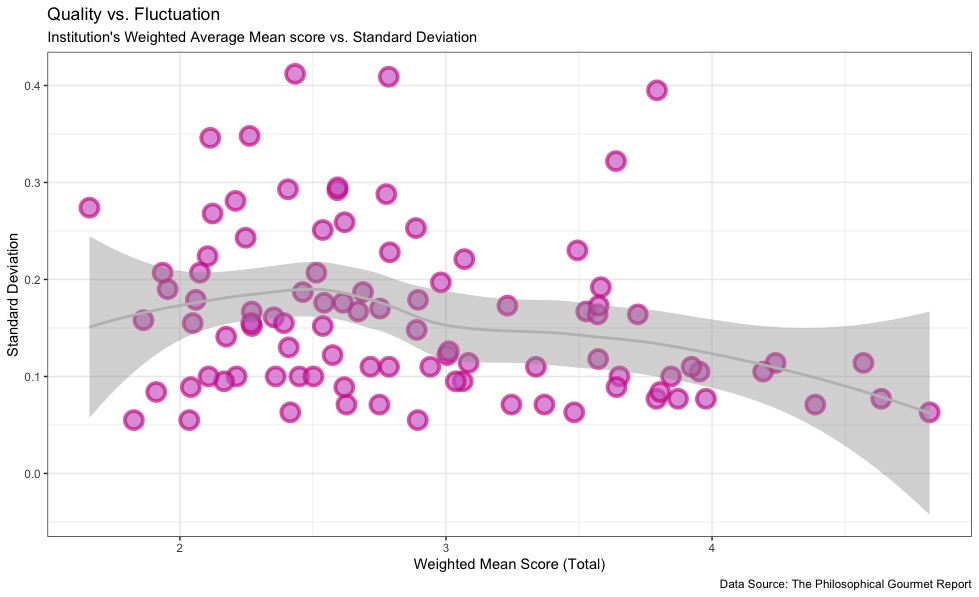

The findings in this analysis largely confirm the findings based on range, but we can be a bit more confident in them knowing that the average scores are appropriately weighted. The plot below shows the average weighted mean score versus the weighted standard deviation of each institution. We can see that institutions with average mean scores between 2 and 3 tended to fluctuate the most, showing the highest standard deviations, compared with institutions with average mean scores above 3. This suggests that either 1) the evaluators had a higher diversity of opinions about the overall quality of institutions on the lower end of the spectrum, or 2) institutions at the lower end of the spectrum tended to have higher, or at least more significant, faculty turnover rates.

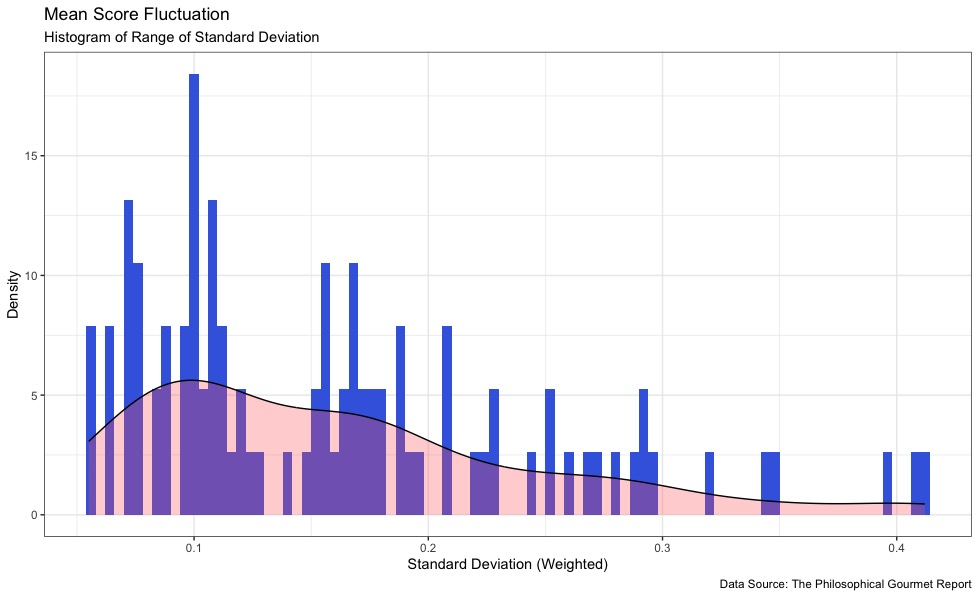

The histogram of weighted standard deviation largely confirms our earlier findings that the majority of institutions fluctuated within a rather low range. Standard deviation values tended to cluster around 0.1-0.2, meaning that most institutions’ scores typically deviated from their weighted mean by only about 0.1 to 0.2 points.

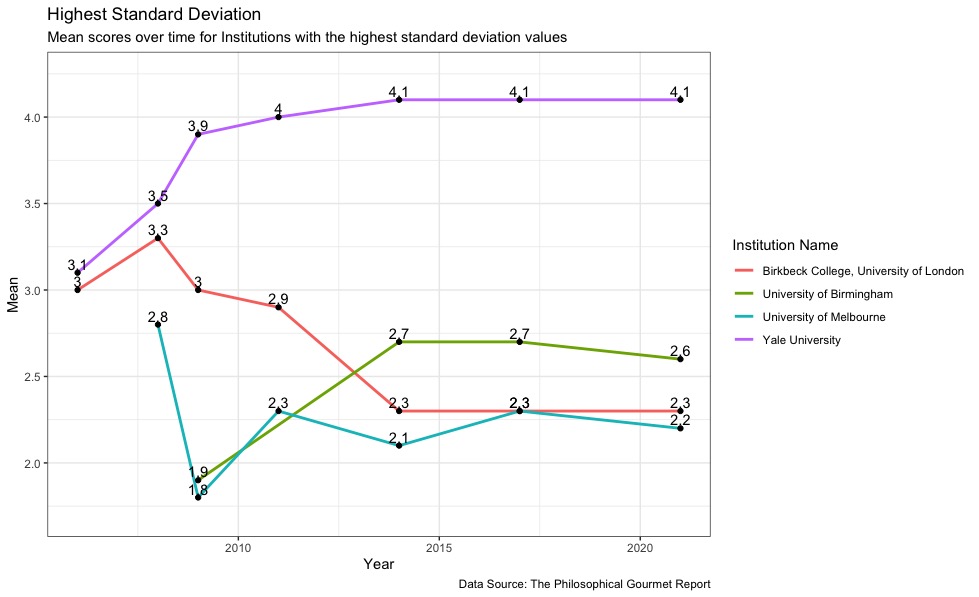

The differences between the standard deviation analysis and the range analysis appear at the ends of the spectrum. Some schools with the highest ranges also had the highest standard deviations, such as Birkbeck College, Yale University, and the University of Melbourne. The University of Southern California, however, dropped out of the top 4: despite its very large range, its standard deviation of 0.322 was lower than Melbourne’s 0.348. Conversely, the University of Birmingham, whose range was not in the top 4, had the highest standard deviation of any institution at 0.412.

When we looked at the schools with the lowest range, very few had range values of less than 0.2, and many had ranges of 0.2 exactly. Purdue University, the University of Cincinnati, and the University of California, Riverside all had the same standard deviation, even though Riverside had a larger range value. Additionally, Brown University, Florida State University, and New York University all had the same standard deviation value of 0.063. Just above that, 8 schools had standard deviation values of 0.071. The vast majority of both groups had range values of 0.2. This is an instructive example of how standard deviation can give us more precise and helpful insights than range as a measure of variability.
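To make the contrast between range and standard deviation concrete, here is a small sketch using two invented score histories. These are not PGR data, and for simplicity the sketch uses the unweighted population standard deviation from Python’s `statistics` module rather than the weighted version used in the analysis. Both series have the same range of 0.2, but one moves only once while the other moves back and forth.

```python
import statistics

# Hypothetical score histories for two institutions (not real PGR data).
one_jump = [2.0, 2.0, 2.0, 2.0, 2.2]     # steady, then a single change
oscillating = [2.0, 2.2, 2.0, 2.2, 2.0]  # moves up and down repeatedly


def score_range(scores):
    """Difference between the highest and lowest score."""
    return max(scores) - min(scores)


# The ranges are identical, so range alone cannot tell these two apart.
print(score_range(one_jump), score_range(oscillating))

# The standard deviations differ, reflecting how often each series moved.
print(statistics.pstdev(one_jump), statistics.pstdev(oscillating))
```

The oscillating series ends up with the larger standard deviation, which is the kind of distinction that a shared range value of 0.2 hides.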

To me, the most interesting finding here is that institutions with very low standard deviations came from a wide range of overall mean scores, from 1.8 all the way to 3.6. This also suggests that the clustering of higher standard deviation values among institutions with weighted average mean scores between 2 and 3 is genuine rather than due to evaluator indecisiveness about lower-scoring institutions, since a number of lower-scoring institutions have clearly managed to maintain a very consistent score over the years.